Published: March 10, 2026

The AI video generation landscape has evolved dramatically, but one challenge has persisted: creating video that looks and feels physically real. With the release of ltx 2.3, that barrier is finally breaking down.

In this post, we'll explore the key innovations that make ltx 2.3 a generational leap in AI video creation — and what it means for creators, marketers, and developers.

The Problem With Previous AI Video

Earlier AI video models suffered from what the industry calls "hallucinations" — characters with floating limbs, gravity-defying objects, and faces that morph between frames. These artifacts made AI-generated video look impressive in demos but unusable in production.

The root cause? Most models treated video as a sequence of loosely connected images rather than a coherent simulation of the physical world. Each frame was generated with only minimal awareness of the frames before and after it, resulting in temporal inconsistency that the human eye immediately picks up on.

For content creators, this meant spending hours cherry-picking usable segments from generated output. For marketing teams, it meant AI-generated video was relegated to internal brainstorming rather than customer-facing campaigns. And for developers integrating AI video into products, it meant constant post-processing pipelines to clean up artifacts.

How ltx 2.3 Solves This

ltx 2.3 addresses these fundamental limitations through three architectural breakthroughs that work in concert. Rather than applying band-aid fixes to existing approaches, the team rebuilt the generation pipeline from the ground up.

Physics-Aware Motion Core

ltx 2.3 is trained on real-world biomechanics and physics data. This means the model understands how gravity, inertia, and light reflection actually work in the real world.

When you generate a scene of a person running, ltx 2.3's physics engine ensures that:

- Feet strike the ground with proper weight transfer, creating realistic impact dynamics

- Clothing reacts to body movement with accurate cloth simulation and wind resistance

- Hair follows natural motion arcs based on movement speed and direction

- Shadows move consistently with the light source throughout the entire sequence

- Environmental elements like leaves, water, and particles respond to subject interactions

This isn't a filter applied on top — it's baked into the architecture at the deepest level. The model learned physics by observing millions of hours of real-world footage, developing an implicit understanding of how objects interact in three-dimensional space.

What makes this particularly powerful is the consistency across long sequences. Previous models might produce a single physics-accurate frame, but over a 5-20 second generation, errors would compound. ltx 2.3 maintains physical coherence from the first frame to the last.

Unified Multimodal Architecture

Instead of separate models for text, image, and audio, ltx 2.3 uses a single unified transformer. This architectural decision has massive implications:

- Better semantic understanding: The model interprets the relationship between your text prompt, reference images, and audio cues holistically, rather than processing each modality in isolation

- Faster generation: One model means fewer processing steps, resulting in faster render times — often 2-3x faster than multi-model approaches

- Synchronized audio: Sound effects, ambient noise, and dialogue are generated in the same forward pass as the video, eliminating the sync drift that plagues post-hoc audio matching

- Intent consistency: A single model interprets your creative intent once, preventing the semantic divergence that occurs when separate models each interpret the same prompt differently

The unified architecture also simplifies the developer experience. Rather than orchestrating multiple API calls to different model endpoints and then stitching the results together, developers work with a single pipeline that handles all modalities natively.

Sharper Fine Detail

A rebuilt latent space with an updated VAE (Variational Autoencoder) trained on higher-quality data means ltx 2.3 preserves:

- Fine textures and skin detail, including pores and micro-expressions

- Text readability within generated scenes — signs, labels, and UI elements remain crisp

- Edge clarity even at complex intersections where multiple objects overlap

- Hair strand-level detail across frames without the common "hair blob" artifact

- Material differentiation: glass looks like glass, metal like metal, fabric like fabric

The improved VAE also enables better color accuracy and dynamic range. Scenes with dramatic lighting — golden hour, neon cityscapes, candlelit interiors — maintain their intended color palette without the washed-out look that affected earlier models.

Real-World Use Cases

Short-Form Social Content

One of the most immediate applications of ltx 2.3 is generating short-form video for social platforms. The model natively supports vertical 9:16 format, meaning generated content is purpose-built for TikTok, Instagram Reels, and YouTube Shorts.

Creators report generating 10-15 usable social clips per hour with ltx 2.3, compared to 2-3 per hour with previous generation models. The key difference is the reduced need for manual quality filtering — a much higher percentage of generated output meets the quality bar for posting.

Product Visualization

E-commerce brands are using ltx 2.3 to create dynamic product showcase videos from static product photography. Upload a product image, add a descriptive prompt about the environment and camera movement, and ltx 2.3 generates a professional-quality product video.

The physics engine is particularly valuable here: products don't float, reflections are accurate, and material properties (glossy, matte, transparent) are rendered correctly. This gives potential customers a realistic sense of the product that static images cannot provide.

Film Pre-Visualization

Independent filmmakers are using ltx 2.3 as a pre-visualization tool, generating rough scene compositions before committing to expensive physical production. Instead of storyboarding with static frames, directors can now create moving storyboards that communicate camera movement, pacing, and atmosphere.

What This Means for Different Users

For Content Creators

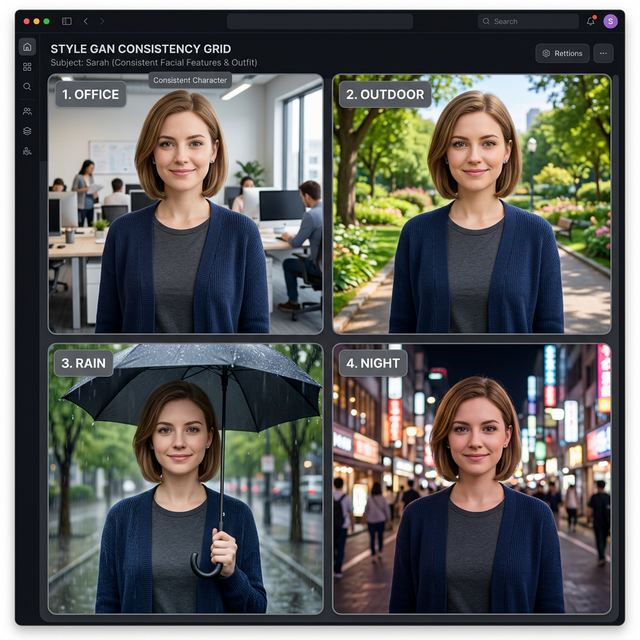

Independent filmmakers and content creators can now visualize complex scenes without a production budget. The physics engine means your AI-generated pitch deck actually looks like how the final film would feel. Character consistency features allow you to maintain the same characters across multiple scenes, enabling serialized content without continuity errors.

For Marketing Teams

Marketing teams can generate product videos that customers trust — because the motion looks real, not synthetic. The multimodal input means you can maintain brand consistency across hundreds of video variants. The speed of generation means you can test multiple creative directions simultaneously, letting performance data guide your creative decisions rather than gut instinct.

For Developers

With Apache 2.0 licensing and LoRA support, developers can fine-tune ltx 2.3 for specific use cases and deploy it in their own applications. The model is open-source and production-ready. The unified architecture simplifies integration — one API endpoint, one model weight, one inference pipeline — reducing operational complexity and infrastructure costs.

Key Specifications

| Feature | ltx 2.3 |

|---|---|

| Max Resolution | Up to 4K |

| Frame Rate | 24 or 48 FPS |

| Max Duration | 20 seconds (single pass) |

| Audio | Synchronized stereo |

| Input Types | Text, Image, Audio, Video |

| License | Apache 2.0 (open-source) |

| LoRA Adapters | Up to 3 simultaneous |

The Future of AI Video

ltx 2.3 represents a turning point in AI video generation. By solving the physics consistency problem at the architectural level, it transforms AI video from a novelty tool into a production-ready creative platform.

The combination of open-source accessibility, physics-aware generation, and unified multimodal processing means that high-quality AI video is no longer limited to well-funded research labs or large studios. Individual creators, small teams, and independent developers now have access to the same caliber of AI video technology.

As the model continues to evolve through community contributions and LoRA customizations, we expect to see entirely new genres of AI-assisted content emerge — from personalized educational videos to interactive storytelling experiences.

Audio Generation: A Game-Changer

One of the most underappreciated breakthroughs in ltx 2.3 is its synchronized audio generation. Previous AI video tools produced silent output, requiring creators to manually source and sync audio tracks — a time-consuming process that often produced imperfect results.

ltx 2.3 generates audio in the same forward pass as video. This means:

- Ambient sounds are matched to the visual environment automatically — ocean waves for coastal scenes, traffic noise for urban shots, wind for open landscapes

- Sound effects are timed precisely to on-screen actions — footsteps sync with foot placement, impacts match visual contact points

- Music scoring follows the emotional arc of the generated scene, adjusting tempo and intensity dynamically

- Dialogue lip-sync has improved significantly over previous models, with mouth movements closely matching generated speech patterns

For creators who previously spent 30-60 minutes per video finding and syncing appropriate audio, this single feature reduces post-production time by an estimated 70-80%.

Accessibility and Pricing

ltx 2.3 is designed to be accessible across a wide range of users and budgets:

Cloud Generation

For users without dedicated GPU hardware, ltx 2.3 is available through the cloud generation platform with simple per-credit billing. This removes the hardware barrier entirely — you can generate 4K video from a laptop, tablet, or even a phone.

Open-Source Self-Hosting

For developers and organizations that prefer full control, the complete model weights are available under the Apache 2.0 license. There are no usage restrictions, no API rate limits, and no recurring fees. Self-hosted deployment on a single NVIDIA A100 can process approximately 50 frames per second at 1080p resolution.

Desktop Application

The free LTX Desktop application provides a user-friendly interface for local generation. It's optimized for consumer GPUs (NVIDIA RTX 3060 and above) and handles memory management automatically, making it accessible to creators who aren't comfortable with command-line tools or cloud APIs.

Community and Support

An active open-source community provides shared LoRA adapters, ComfyUI workflows, and generation presets. Whether you're looking for anime-style LoRAs, cinematic color grading presets, or specialized motion profiles, the community ecosystem significantly extends ltx 2.3's out-of-the-box capabilities.

Getting Started

Ready to experience the difference? Try ltx 2.3 for free and see how physics-aware AI video changes your creative workflow.

For a deeper technical understanding, check out our architecture deep dive. If you're interested in marketing applications, read our guide to AI video marketing strategies.